Assessment Questions

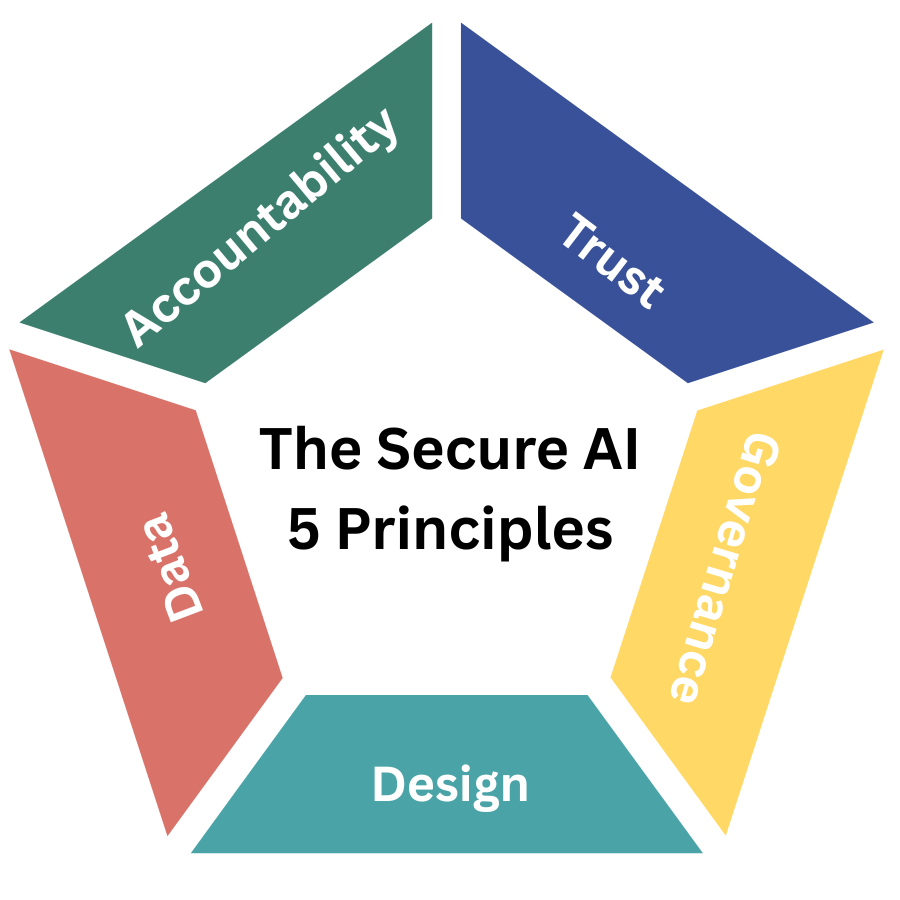

Trust Is the New Perimeter 5 questions

Do we have a defined and measurable framework for AI trust and assurance across all business units?

Are external stakeholders (customers, regulators, partners) confident in our AI transparency and fairness?

Is independent validation or red-teaming used for our most critical AI systems?

Have we set clear thresholds for when an AI system is considered "untrustworthy" or high-risk?

Can non-technical leaders easily understand how key AI decisions are made and justified?

Governance Must Move Faster Than Innovation 5 questions

Does our governance model keep pace with the speed of AI development and deployment?

Is there a named executive or committee accountable for approving all high-impact AI use cases?

Are AI risks formally integrated into our enterprise risk, compliance, and audit reporting?

Have we defined "responsible AI" in measurable business terms rather than as a policy statement?

Does an AI governance council exist with authority to delay or halt initiatives when risks exceed thresholds?

Security by Design, Not by Audit 5 questions

Are AI security, privacy, and ethics embedded into the design process rather than added later?

Are cross-functional teams (risk, legal, security) involved early in AI product design decisions?

Are performance incentives aligned with building safe AI systems, not just fast ones?

Do we systematically capture and act on lessons learned from AI incidents or failures?

Are human override and safe fallback mechanisms built into AI experiences?

Data Is the Weakest Link 5 questions

Do we have full visibility into where our data originates and how it is used in AI models?

Are data quality, bias, and leakage recognized as core AI risks in our organization?

Are data storage and processing decisions aligned with jurisdictional and sovereignty requirements?

Have we assessed reputational risks related to the use of customer or employee data in model training?

Is data lineage and integrity reporting visible at the same level as financial or operational metrics?

Accountability Cannot Be Delegated 5 questions

Does every major AI system have a named executive accountable for its operation and outcomes?

Do we have clear escalation and ownership processes for AI-related incidents or harm?

Are we tracking both the business value and the social or ethical impact of AI systems?

Is accountability for AI performance and integrity embedded in leadership objectives and culture?

Could we confidently explain to regulators, auditors, or the public who approved each major AI system and why?